
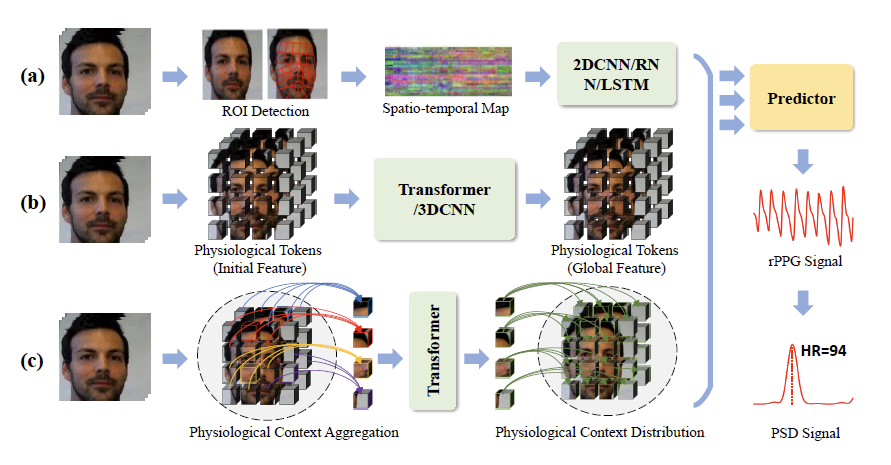

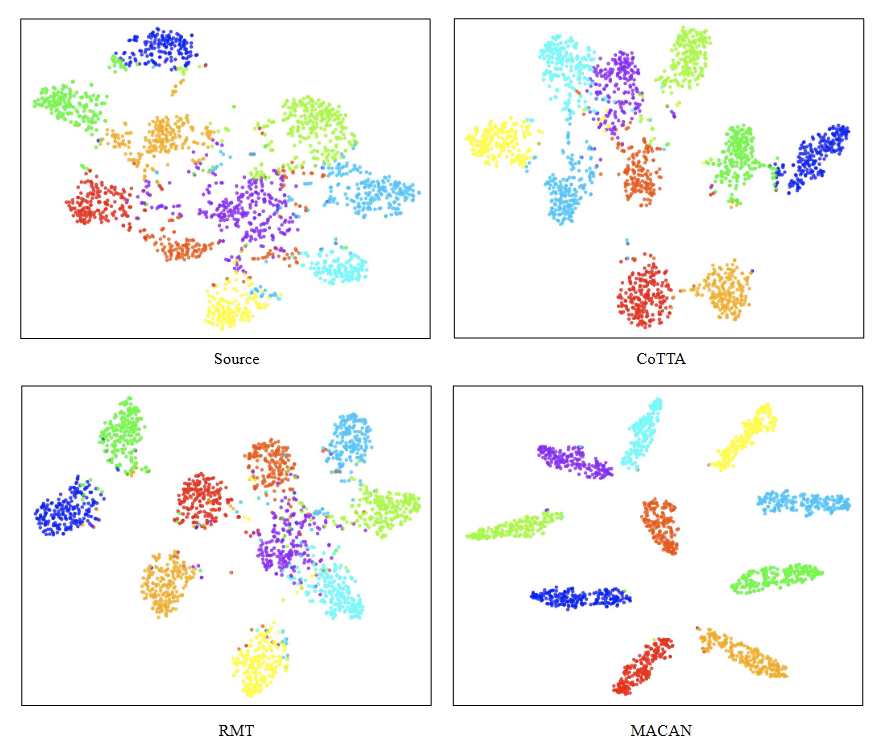

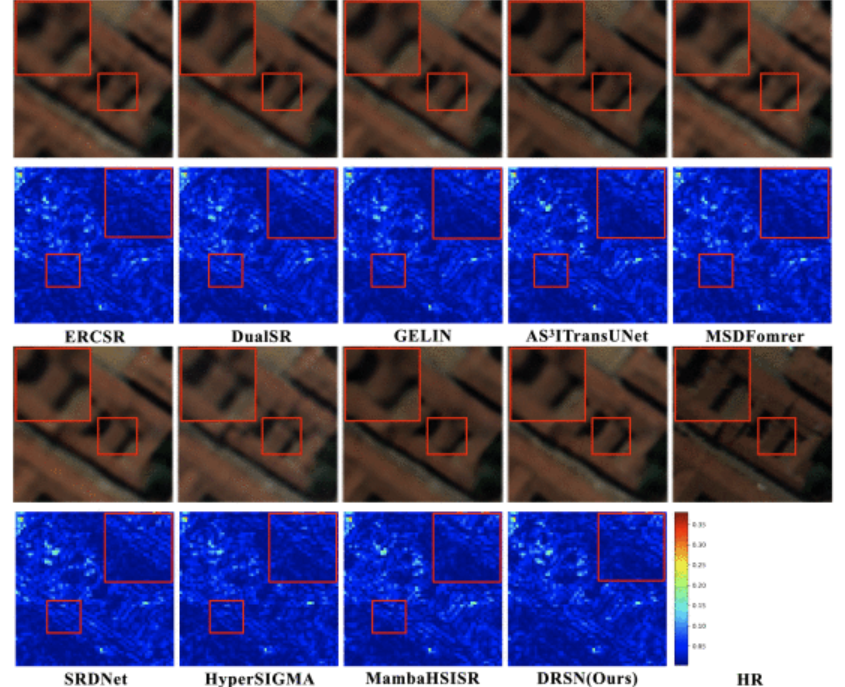

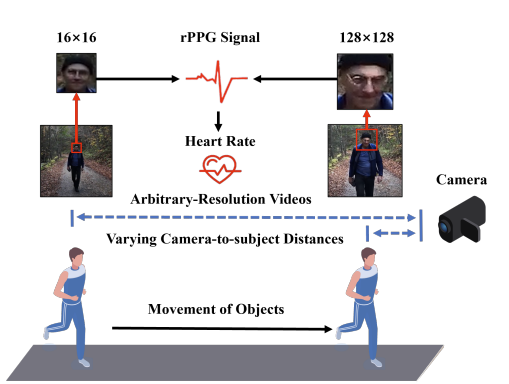

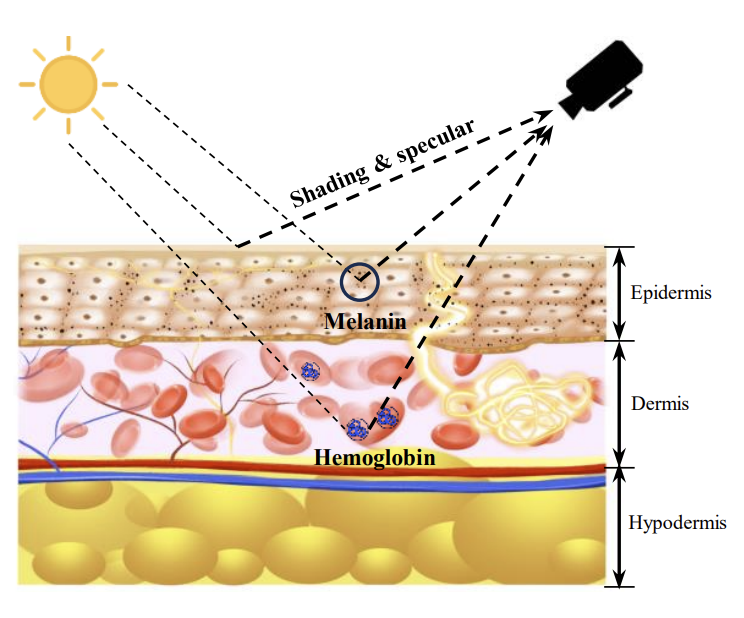

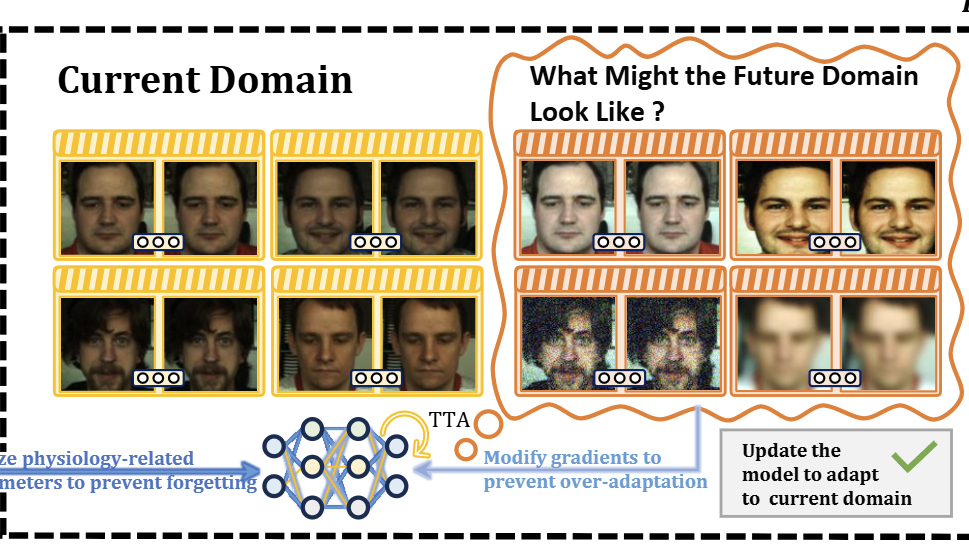

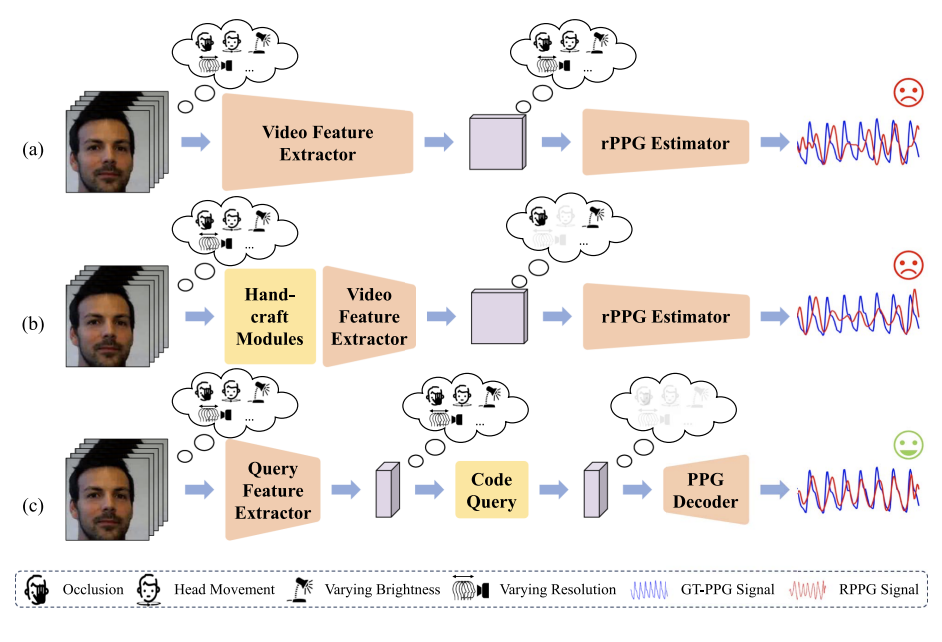

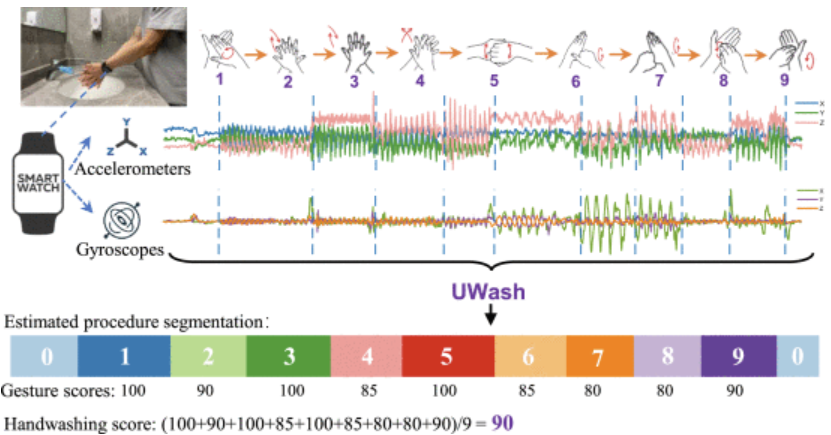

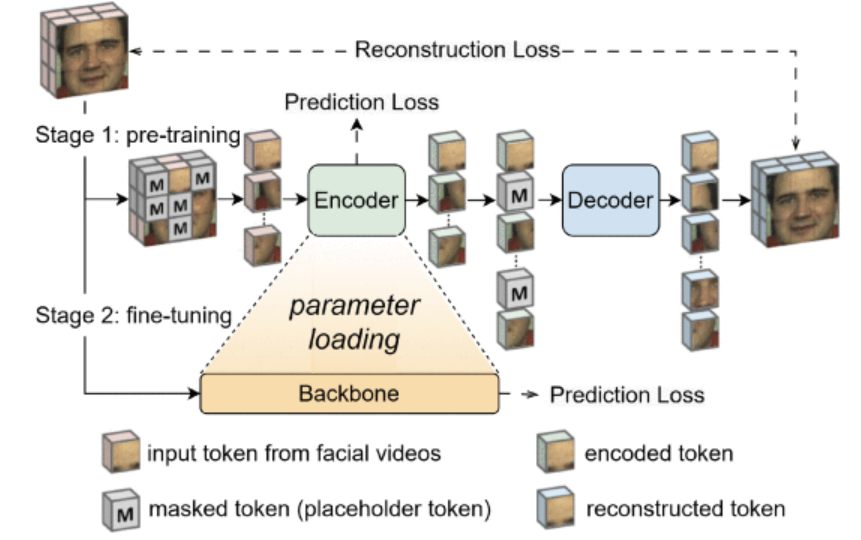

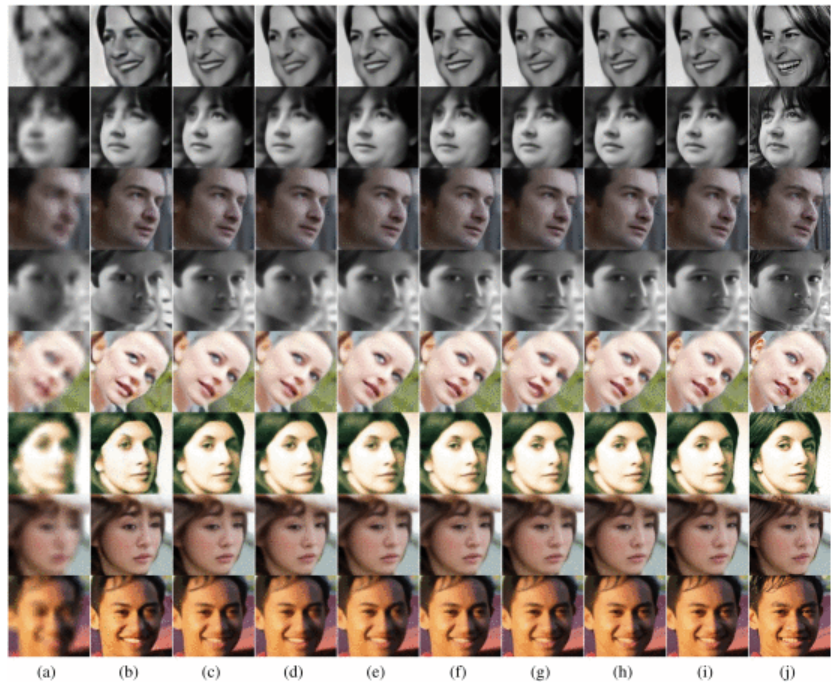

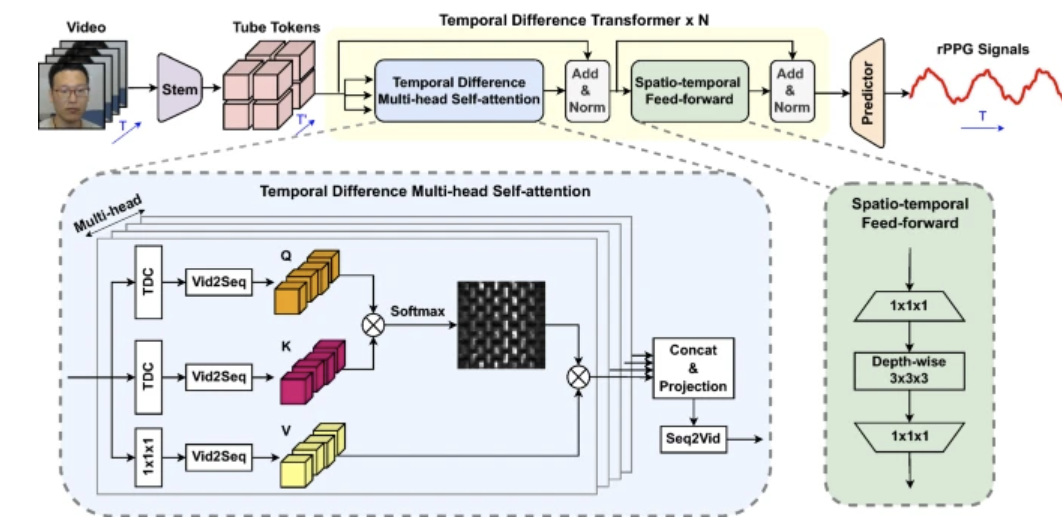

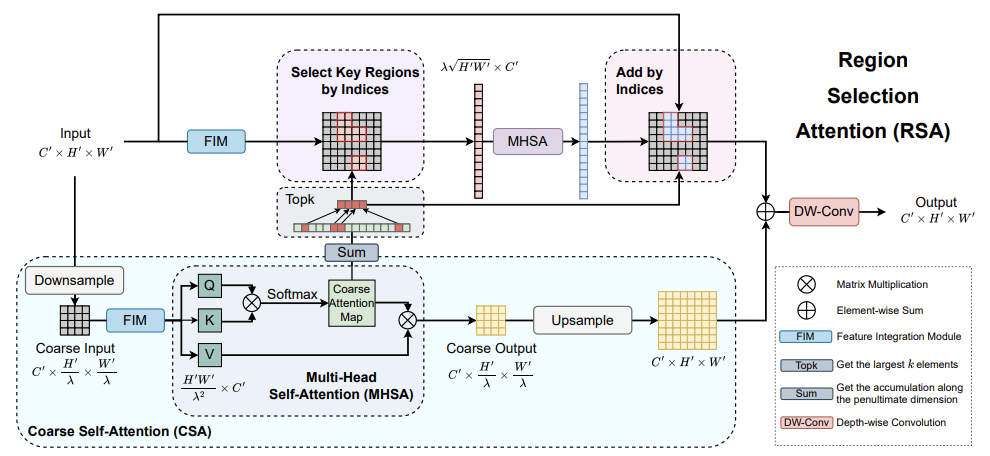

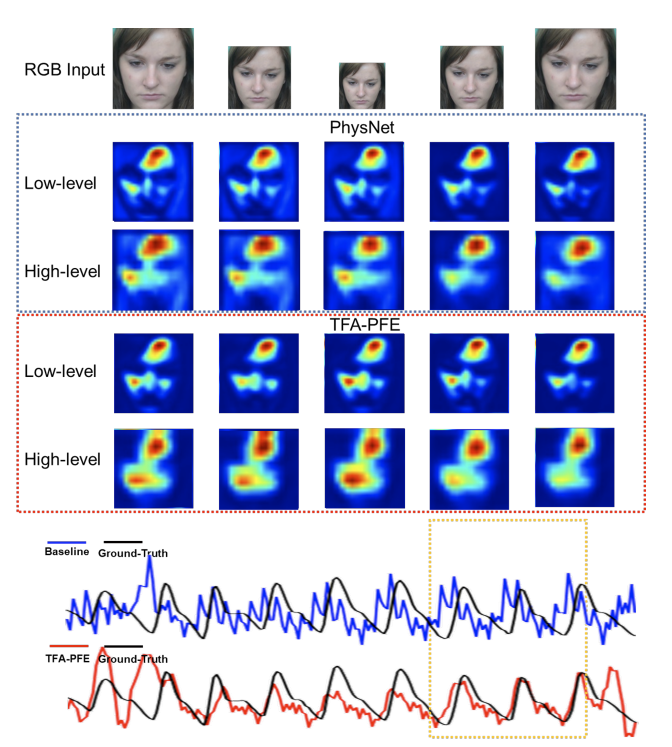

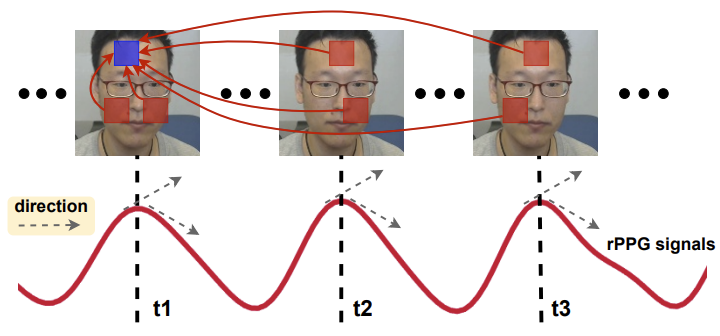

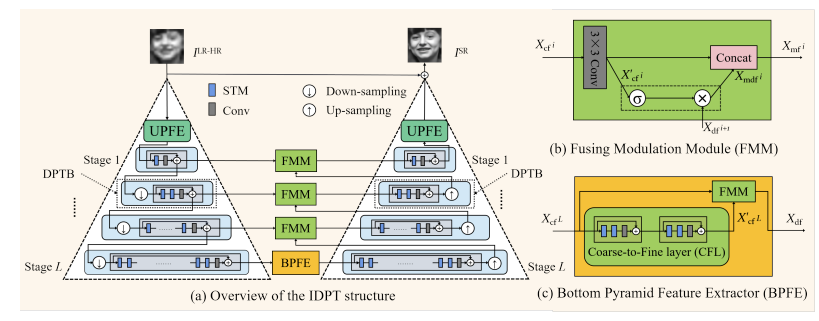

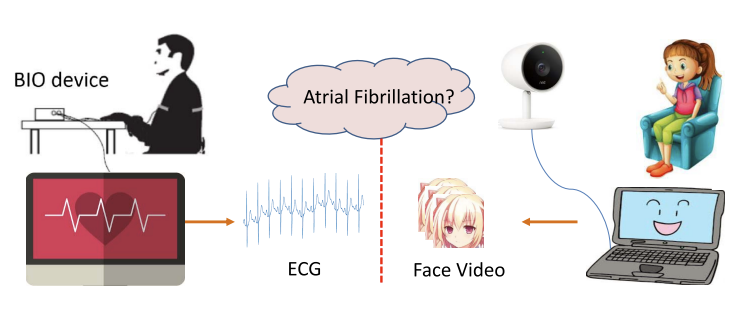

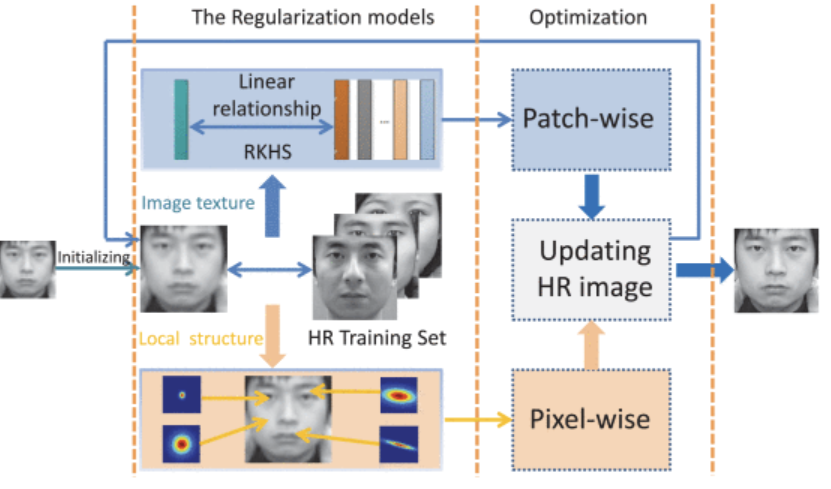

Jingang Shi | Xi'an Jiaotong University

I am now an Associate Professor at the School of Software, Xi'an Jiaotong University. Before that, I conducted postdoctoral research in CMVS, University of Oulu, where I was advised by Academy Professor Guoying Zhao. During my postdoctoral research, I visited the Multimedia and Human Understanding Group (MHUG), University of Trento, Italy. Prior to that, I received the B.E. and Ph.D. degrees from Xi'an Jiaotong University, China. I was selected for the Xi'an Jiaotong University Young Top-Notch Talent Program and named a Xiaomi Young Scholar.

My research interests include Machine Learning, Human Behaviour Analysis, Emotion AI and Adversarial Learning. I strongly believe we are here, on this planet and in this time and realm, to experience, learn, and grow, which gives me a great passion for lifelong self-development.

My research focuses on advancing multimedia computing through the integration of deep learning, computer vision, and human-centered AI. It covers three main areas: high-fidelity multimedia restoration, multimodal affective computing, and remote physiological signal monitoring. The work on high-fidelity multimedia restoration addresses real-world degradation models to restore high-fidelity visual content, with applications in digital multimedia preservation, medical imaging, and intelligent surveillance. The research on affective computing develops multimodal emotion recognition frameworks that leverage visual, audio, and physiological signals to enable adaptive human-computer interaction. In addition, the research on remote physiological signal monitoring explores non-contact measurement of vital signs from facial videos under challenging environmental conditions, aiming to enhance healthcare accessibility in daily wellness scenarios. Together, these areas represent a unified effort to design intelligent multimedia systems capable of understanding, restoring, and interpreting complex real-world human-centered data.
Attention: We are recruiting self-motivated students on a long-term basis to join my research team, which focuses on Hybrid Intelligence. Please email me your CV.
